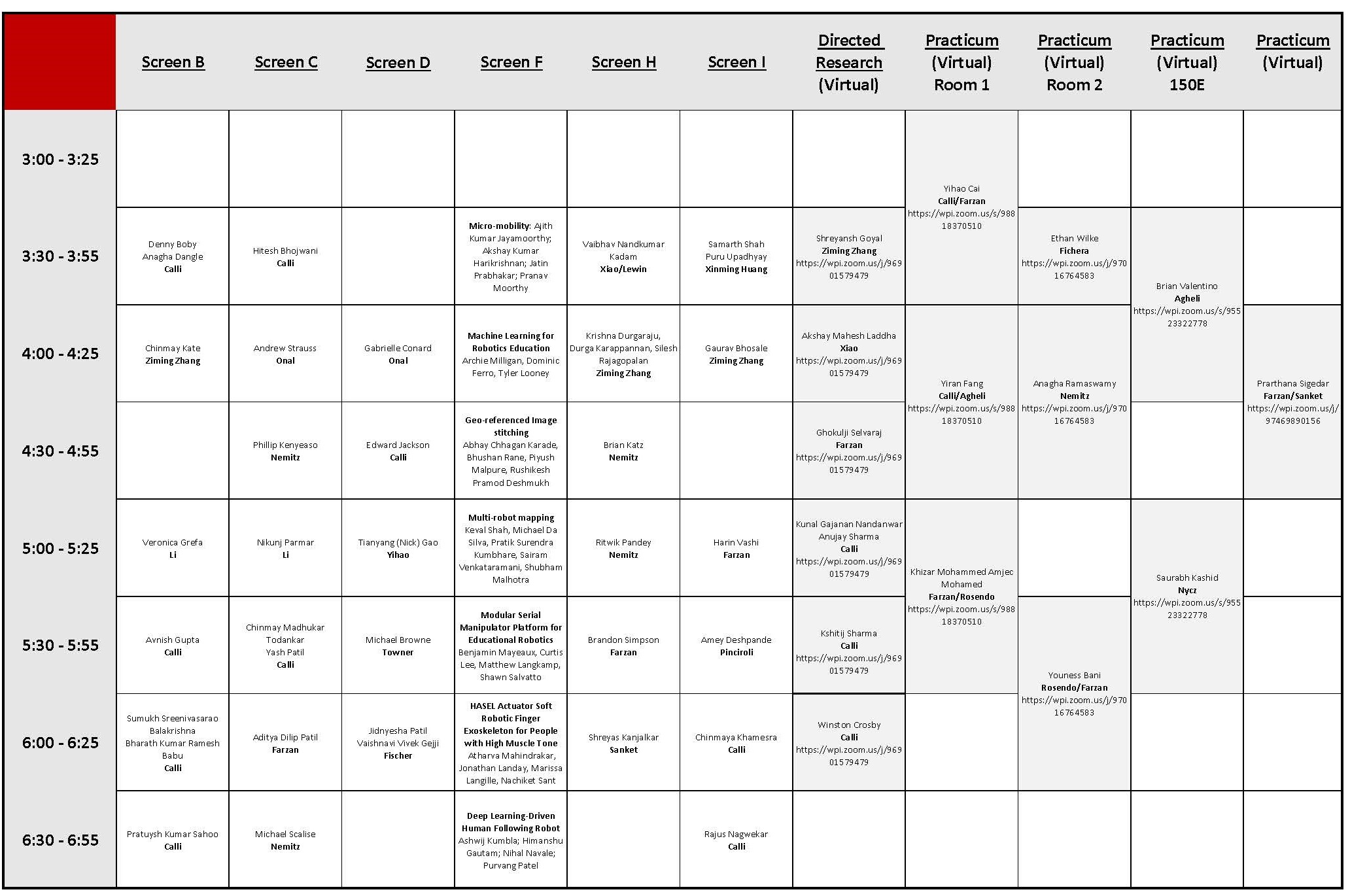

RBE Capstone Presentation Showcase

3:30 p.m. to 7:00 p.m.

UH 500 - Screen B

(3:30 - 3:55) Real-Time Grasp Selection And Grasp Planning for Dexterous Picking

Team Members: Denny Boby and Anagha Dangle

Abstract: This project aimed to develop a deep learning-based approach to enable a robot to autonomously select the best dexterous manipulation skill for a given picking instance. A large-scale dataset of labeled images was generated, consisting of various objects in both single and cluttered configurations. The dataset was used to train a Convolutional Neural Network (CNN) to recognize and classify the objects based on their geometries, pose, and environment properties, and map each object to one of the six manipulation skills. At runtime, the trained CNN was utilized to determine the most appropriate manipulation skill to use. The effectiveness of the approach was evaluated through demonstrations conducted on a Franka Emika Panda arm equipped with an open-source underactuated gripper.

Advisor: Professor Berk Calli, Worcester Polytechnic Institute (WPI)

(4:00 - 4:25) Semantic Segmentation of 3D LiDAR Point Clouds for Autonomous Driving in Real Time

Chinmay Kate

Abstract: Scene understanding is an essential prerequisite for autonomous driving. Semantic segmentation helps build a rich understanding of the scene by predicting labels for individual sensor data points. In this research, the RangeNet++ architecture is used to semantically segment 3D LiDAR point clouds by projecting them onto range images. In addition, pixel shuffle was implemented at the bottleneck, which reduced the total number of parameters and resulted in accurately segmented 3D point clouds at frame rate.
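
The projection of a LiDAR point onto a range image mentioned in this abstract can be illustrated with a minimal sketch. It uses the spherical projection commonly paired with range-image networks; the function name, image size (1024 x 64), and vertical field of view (+3 to -25 degrees) are assumptions chosen for illustration and are not taken from the project.

```python
import math

def project_to_range_image(x, y, z, width=1024, height=64,
                           fov_up_deg=3.0, fov_down_deg=-25.0):
    """Map one LiDAR point (sensor frame) to a range-image pixel.

    Returns (row, col, range). All default parameters are illustrative
    assumptions, not values reported by the presenters.
    """
    r = math.sqrt(x * x + y * y + z * z)
    if r == 0.0:
        raise ValueError("a point at the sensor origin has no direction")

    yaw = math.atan2(y, x)      # horizontal angle in [-pi, pi]
    pitch = math.asin(z / r)    # vertical angle

    fov_down = math.radians(fov_down_deg)
    fov = math.radians(fov_up_deg) - fov_down  # total vertical field of view

    # Normalize the angles to image coordinates.
    u = 0.5 * (1.0 - yaw / math.pi) * width
    v = (1.0 - (pitch - fov_down) / fov) * height

    # Clamp to valid pixel indices.
    col = min(width - 1, max(0, int(math.floor(u))))
    row = min(height - 1, max(0, int(math.floor(v))))
    return row, col, r
```

A point straight ahead of the sensor lands in the middle column and near the top of the image, because most of the assumed field of view lies below the horizon.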

Advisor: Professor Ziming Zhang, Worcester Polytechnic Institute (WPI)

(5:00 - 5:25) Improving Human-Robot Interaction for Shared Control and Enhanced Spatial Awareness in a Dual-Arm Nursing “Gopher” Robot

Veronica Grefa

Abstract: This project presents improvements made to the human-robot interaction (HRI) system of the dual-arm nursing robot "Gopher-in-Unity" simulation developed by the Human-Inspired Robotics (HIRO) lab. The enhancements aim to enable shared control between the human and robot, where they collaborate in providing control inputs based on their respective strengths for given tasks. Furthermore, the modifications aim to increase trust and transparency by enabling effective communication between the human and robot during navigation, obstacle avoidance, and object manipulation. Finally, the enhancements also aim to improve the operator’s spatial awareness during manual teleoperation in confined spaces or corners. Overall, these improvements aim to enhance the performance of the nursing robot in healthcare environments where safe and effective collaboration between human and robot is crucial.

Advisor: Professor Jane Li, Worcester Polytechnic Institute (WPI)

(5:30 - 5:55) Active Vision for Robotic Grasping

Avnish Gupta

Abstract: Robotic grasping algorithms often use a single image of an object, which limits their success because they rely heavily on the camera’s viewpoint. Active vision allows robots to control their sensors’ movements, enabling them to collect information intelligently and interact with unknown objects in uncertain conditions. Our project aims to find the most efficient next viewpoint for vision-based grasping by designing and implementing two algorithms and testing them on a benchmark object set. We compared their performances to each other, as well as to optimal and random strategies, to draw conclusions about challenging objects and promising grasping approaches.

Advisor: Professor Berk Calli, Worcester Polytechnic Institute (WPI)

(6:00 - 6:25) Benchmarking Vision-Based Grasping Algorithms

Team Members: Sumukh Sreenivasarao Balakrishna, Bharath Kumar Ramesh Babu

Abstract: This project compares two learning-based and two analytical-based grasp detection algorithms using a benchmarking module and a simulator. The analytical algorithms were significantly improved to enhance their performance, while the learning algorithms were used as-is. Our findings indicate that analytical algorithms outperform learning algorithms in constrained environments, while learning algorithms may be more suitable for unstructured environments. These results can aid in selecting the appropriate algorithm for a specific application.

Advisor: Professor Berk Calli, Worcester Polytechnic Institute (WPI)

(6:30 - 6:55) Design and Implementation Of a Scrap Metal Cutting Robot

Pratuysh Kumar Sahoo

Abstract: Metal cutting is an essential process in several industries, requiring high levels of accuracy and precision. As a result, researchers have been focusing on developing advanced cutting technologies, including the use of robots. Metal cutting robots offer numerous benefits, such as increased efficiency, accuracy, and safety, making them a popular research area. In this paper, we present a comprehensive study of the design and implementation of a metal cutting robot. Our research emphasizes critical design factors, such as selecting the appropriate type of robot, cutting tool, and control system. We also address the challenges encountered during the implementation process and suggest solutions to overcome them. Our findings show that using a metal cutting robot in industrial applications is both practical and effective. This work shows the design of an autonomous metal cutting robot that works on visual feedback.

Advisor: Professor Berk Calli, Worcester Polytechnic Institute (WPI)

UH 500 - Screen C

(3:30 - 3:55) Vision based grasping

Hitesh Bhojwani

Abstract: This project presents a comparison between an analytical vision-based grasping algorithm and learning-based grasping approaches on YCB objects and the Cornell dataset in simulation. The analytical algorithm finds all feasible grasps using a depth image from a RealSense camera in an eye-in-hand configuration and then filters them using multiple grasp metrics, such as distance from the centroid and curvature of the object, to find the best grasp candidate. The evaluation experiments used the Gazebo simulation environment and a Franka Emika Panda arm.

Advisor: Professor Berk Calli, Worcester Polytechnic Institute (WPI)

(4:00 - 4:25) 3D Force Sensing Module

Andrew Strauss

Abstract: In a robotic system it is often the case that there are some aspects of the robot that are unobservable. Many robots take advantage of closed loop control to accomplish tasks since it removes the robot’s reliance on dead reckoning and allows the robot to be more autonomous and reliable. In this paper we will discuss a new type of 3D force sensor that can be mounted easily almost anywhere on a robot and provide feedback to the device it is integrated into. We will also cover one of the possible applications by showing off an optimized robotic hand with many of these integrated force sensors within. This paper will only cover the design, manufacturing, and construction of this hand. The utilization of its features is a good place for future work to begin.

Advisor: Professor Cagdas Onal, Worcester Polytechnic Institute (WPI)

(4:30 - 4:55) Magnetic Signaling through Ferrofluids

Phillip Kenyeaso

Abstract: Sensing is a key component of any robot. This project aimed to analyze ferrofluid’s properties to increase magnetic field strength for magnetic signaling across wearable haptics and other devices. Using the change in magnetic field strength read from the ferrofluid, one can determine the length and/or angle of the ferrofluidic channel. Tubes and vials housing a commercially available ferrofluid from Ferrotec were attached to neodymium magnets. Readings indicated that the ferrofluid can extend the range for magnetic signaling, highlighting the possibility of adding more compliant mechanisms to robots or wearable devices.

Advisor: Professor Markus Nemitz, Worcester Polytechnic Institute (WPI)

(5:00 - 5:25) AR + LEGO Robots: Interactive Play and Embodied Storytelling

Nikunj Parmar

Abstract: This project presents a novel interactive and embodied storytelling robot system that integrates an advanced augmented reality device (HoloLens) with customizable LEGO robots. The system uses the HoloLens camera to detect text and pictures on a physical book page and reads them to the human audience in interactive play. Two motorized LEGO robots generate expressive body motions and verbal conversation to interact with the audience based on the story content and pictures. The HoloLens can be used for gaze tracking, detecting and recognizing the customized LEGO creatures, and generating content-relevant conversations and activities to interact with the human audience.

Advisor: Professor Jane Li, Worcester Polytechnic Institute (WPI)

(5:30 - 5:55) Vision-based Framework for Fully-autonomous Oxy-fuel Metal Cutting

Team Members: Chinmay Madhukar Todankar and Yash Patil

Abstract: In sectors such as manufacturing and metal recycling, automating the industrial process of metal cutting would yield substantial improvements to productivity and safety. We propose a vision-based framework to fully automate metal cutting based on oxy-fuel torches, a cutting technology widely used in industry. Our framework enables a robot equipped with a cutting torch and an eye-in-hand RGB camera to autonomously execute a cut along a given cutting path. We achieve this in three automated tasks: vision system calibration, metal surface conditioning, and torch combustion control. The visual feedback is obtained from the camera observing the torch flame and heated region behind a tinted visor. These image frames are processed to extract meaningful features such as the heat pool’s convexity and intensity. Using these features, we model the desired conditions for visual calibration, surface conditioning, and combustion control. During calibration, the vision system is configured to the current scene and setup by detecting the torch flame’s centroid and its baseline intensity. Afterwards, during conditioning, the metal surface is preheated to adequate conditions for initiating combustion. Finally, during control, the torch is moved at an adequate cutting speed by visually observing the metal surface’s heated region and maintaining desired combustion conditions. We evaluate our framework in physical cutting experiments using a 1-DOF robot that autonomously cuts steel plates of different thicknesses. Our system successfully executes the cuts relying purely on vision without prior knowledge of the plate thicknesses.

Advisor: Professor Berk Calli, Worcester Polytechnic Institute (WPI)

(6:00 - 6:25) Design of Robust Adaptive Controller for Quadrotor UAVs for Aerial Drone Delivery

Aditya Patil

Abstract: Quadrotors have become increasingly popular for drone delivery because of their easy accessibility to areas with heavy traffic and difficult terrain. Their ability to hover and maneuver in tight spaces makes them one of the top candidates for last-mile delivery in urban areas. This research presents the design of a control algorithm for an aerial drone drop delivery service, which is robust to uncertain flight disturbances and rapidly adapts to changes in model parameters. It has potential applications in quadrotor UAVs used for transportation and logistics, where a payload of unknown mass needs to be carried to and dropped at the target location. The proposed control algorithm is a two-layer robust adaptive controller. The outer layer specifies a quadratic program (QP) to optimize the control signal subject to a Rapidly Exponentially Stabilizing Control Lyapunov Function (RES-CLF) constraint to guarantee trajectory tracking. The inner layer employs an adaptive learning law to estimate the model uncertainties, which are parameterized by radial basis functions. To ensure the stability of the system, a Lyapunov-based stability analysis is carried out. The controller was tested on the 3DR Iris drone platform, and the results show that the controller is effective in stabilizing the quadrotor while maintaining accurate trajectory tracking and payload estimation, even in the presence of significant disturbances and uncertainties.

Advisor: Professor Siavash Farzan, Worcester Polytechnic Institute (WPI)

(6:30 - 6:55) Fluidic Logic: Vacuum Actuator, controller, and sensor (VACS)

Michael Scalise

Abstract: This study introduces a design strategy for embedding fluidic controllers and sensors directly into fluidic actuators, specifically vacuum-based actuators with embedded fluidic control and sensing (VACS). VACS consist of linear actuators that interrupt fluid flow when actuated, acting as a NOT gate. The author presents VACS as oscillatory circuits used in various applications such as walking, crawling, and swimming robots, which can be 3D-printed using standard FDM printers. The method allows for the integration of control and sensing elements into soft robots by design, facilitating the development of vacuum-based soft robots that have embedded control and actuation while being scalable, easy to fabricate, requiring fewer assembly steps, and inexpensive. The study shows promise in lowering the cost and time required for manufacturing soft robots while imparting structural intelligence into robots.
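
One way NOT gates can form an oscillatory circuit is a ring oscillator: an odd number of inverters connected in a loop. The sketch below is a discrete-time toy model under stated assumptions (three gates, one time step of delay per gate, boolean pressure states); the abstract does not specify the circuit topology, gate count, or timing, and the function names are hypothetical.

```python
def step_ring(state):
    """Advance a ring of NOT gates by one gate delay.

    Gate i reads the previous output of gate i-1 (wrapping around)
    and outputs its logical inverse.
    """
    n = len(state)
    return [not state[(i - 1) % n] for i in range(n)]

def simulate_ring(initial, steps):
    """Return the list of states visited, starting with the initial state."""
    states = [list(initial)]
    for _ in range(steps):
        states.append(step_ring(states[-1]))
    return states

# Three inverters in a loop: the pattern repeats every 2 * 3 = 6 steps.
history = simulate_ring([True, False, False], 6)
for state in history:
    print("".join("1" if s else "0" for s in state))
```

In this toy model each gate's output toggles in a fixed phase relative to its neighbors, which is the kind of staggered signal a gait for a walking or crawling robot could be driven by.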

Advisor: Professor Markus Nemitz, Worcester Polytechnic Institute (WPI)

UH 500 - Screen D

(4:00 - 4:25) Design and Dynamic Modeling of an Origami-Based Continuum-Spined Robot

Gabrielle Conard

Abstract: Biologically-inspired robots have the potential to maneuver in spaces inaccessible to traditional wheeled mobile robots or even human workers. Increasingly in academia, researchers have been incorporating soft materials or elements into their robot designs, especially soft spines, to mimic the flexibility and adaptability of their biological counterparts. However, soft robots are notoriously difficult to model, as the very factor that makes them adaptable, their softness, also endows them with unpredictable behavior. In addition, untethered mobile robots are rarer in soft robotics than in traditional rigid robotics due to a reliance on pneumatic or hydraulic actuation, which limits the usefulness of soft robotics in the real world. To meet these challenges, our lab has been developing several soft-hybrid robots that employ cable-driven origami-based continuum structures for their spines, allowing for untethered operation and repeatable behavior. In this presentation, I will discuss my contributions to the systems-level design process of adapting one of these robots into an inspection robot in collaboration with the City of Worcester. I will also present a simple dynamic model of the origami structure based on traditional rigid mechanisms with the goal of simulating the entire soft-hybrid robot system and performing control system design in the future.

Advisor: Professor Cagdas Onal, Worcester Polytechnic Institute (WPI)

(4:30 - 4:55) Holistic Hybrid Mobile Manipulator Control

Edward Jackson

Abstract: This letter details the design of a reactive hybrid mobile manipulator based on the implementation from the "Design and Implementation of a Mobile Manipulator" MQP. This work is heavily based on "A Holistic Approach to Reactive Mobile Manipulation" by Haviland et al. Their approach of treating the mobile base and robot arm as a single system in a motion controller enabled smooth, graceful motion that achieves a desired end effector pose and avoids joint position and velocity limits while maximizing Yoshikawa’s manipulability index. This project expands their work by incorporating three additional degrees of freedom (DoFs) in a parallel manipulator elevator between the mobile base and the robotic arm to create a 12-DoF system. We demonstrate and compare various implementation methods to incorporate the elevator through simulation in ROS1/Gazebo. Additionally, we discuss the homogeneous Power Manipulability as a promising alternative manipulability index to restructure the motion controller algorithm.
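
Yoshikawa's manipulability index is defined as w = sqrt(det(J Jᵀ)), where J is the manipulator Jacobian. The sketch below evaluates it for a toy planar two-link arm, which is an assumption made purely for illustration (the project itself concerns a 12-DoF system); the function names and unit link lengths are hypothetical.

```python
import math

def planar_2r_jacobian(q1, q2, l1=1.0, l2=1.0):
    """Position Jacobian of a planar two-link (2R) arm at joint angles q1, q2."""
    s1, c1 = math.sin(q1), math.cos(q1)
    s12, c12 = math.sin(q1 + q2), math.cos(q1 + q2)
    return [[-l1 * s1 - l2 * s12, -l2 * s12],
            [l1 * c1 + l2 * c12, l2 * c12]]

def yoshikawa_manipulability(J):
    """Compute w = sqrt(det(J J^T)) for a Jacobian with exactly two rows."""
    a = sum(v * v for v in J[0])                 # (J J^T)[0][0]
    b = sum(p * q for p, q in zip(J[0], J[1]))   # (J J^T)[0][1]
    d = sum(v * v for v in J[1])                 # (J J^T)[1][1]
    # Guard against tiny negative values caused by rounding near singularities.
    return math.sqrt(max(0.0, a * d - b * b))

w_bent = yoshikawa_manipulability(planar_2r_jacobian(0.3, math.pi / 2))
w_straight = yoshikawa_manipulability(planar_2r_jacobian(0.3, 0.0))
print(w_bent, w_straight)
```

For this arm the index reduces to l1 * l2 * |sin(q2)|, so it peaks with the elbow at a right angle and drops to zero when the arm is fully stretched, which is why a controller that maximizes it tends to keep the arm away from singular configurations.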

Advisor: Professor Berk Calli, Worcester Polytechnic Institute (WPI)

(5:00 - 5:25) A Robotic Platform for At-Home Ultrasound Diagnostic Imaging

Tianyang (Nick) Gao

Abstract: Continuing the development of a portable teleoperated robot for ultrasound diagnostic imaging, the design of the device was further finalized. Last year’s work included completing the device’s design and conducting human testing, which highlighted the importance of this telemedicine diagnostic tool, particularly during the COVID-19 pandemic. The device is a 6-degree-of-freedom teleoperated robot paired with a third-party portable ultrasound probe. To enhance safety, the device was equipped with a compliant contact, a safety enclosure, a new electrical system, and an emergency switch. This year, with a sonographer’s advice, an intuitive, ultrasound probe-shaped, 6-DOF controller with one-to-one motion mapping was implemented. Furthermore, feedback control and emergency switch improvements have been implemented as well. The telemedicine tool has the potential to improve medical care access and reduce infection transmission risks. Further testing will be conducted to evaluate the device’s new features.

Advisor: Professor Zheng Yihao, Worcester Polytechnic Institute (WPI)

(5:30 - 5:55) Optimizing Automated Manufacturing Processes Using Axiomatic Design Methods

Michael Browne

Abstract: Automating industrial manufacturing processes is a task that is often easier said than done. Due to emergent behaviors in both the end item being assembled and the robotic assemblers themselves, it is not uncommon for a change in one aspect of the design of the combined system (product + robot assembling the product) to have unintended impacts in seemingly unrelated areas of the overall system. These emergent behaviors are usually the result of poor or incomplete mapping of all the interactions between all the characteristics of the system. However, the axiomatic method provides the tools necessary not only to begin mapping these interactions, but also to confirm that all the requirements of the system have been met and are organized in an optimal way. The objective of this paper is to objectively analyze a hypothetical automated manufacturing environment, and all the aspects of its design that will be necessary for it to succeed in its mission of generating profit for the company that operates it. Currently, factories are often designed after the fact, once a product has been developed, and all manufacturing processes are tailored to suit it. Any defects or inefficiencies in a process are dealt with reactively, after they have already had a financial impact on the company. Instead, this paper proposes designing product and manufacturing process concurrently by utilizing the axiomatic design method, and that by doing so, it becomes possible for interactions to be fully mapped and understood before anything, whether product or manufacturing tools, is built. By doing things this way, this paper shows that it then becomes possible to better utilize available robotic manufacturing tools and processes.

Advisor: Professor Walter Thomas Towner, Worcester Polytechnic Institute (WPI)

(6:00 - 6:25) Face Tracking and Behaviour Inference from Pose for PABI

Team Members: Jidnyesha Patil and Vaishnavi Vivek Gejji

Abstract: The goal of this research was to derive basic inferences about the behaviour of the subject in an ABA therapy session. The major aim is to track the head of the subject throughout the session and detect nervousness so the therapy session can be adjusted accordingly. Facial recognition was done using FaceNet with 100% accuracy. Another approach using PCA with eigenfaces achieved 97% accuracy with a reduced computational cost. The pose was detected using the MediaPipe pipeline and classified into two basic classes: 'studying' and 'looking here and there'. Behavioral cues were taken from the detected pose to trigger calming exercises.

Advisor: Professor Greg Fischer, Worcester Polytechnic Institute (WPI)

UH 500 - Screen F

(3:30 - 3:55) Stability of micro-mobility platform

Team Members: Ajith Kumar Jayamoorthy, Akshay Kumar Harikrishnan, Jatin Prabhakar, Pranav Moorthy

Abstract: As defined by the Institute of Transportation and Policy, “Electric micro-mobility refers to electric powered modes of transportation that are low speed (compared to bicycle), small, lightweight, and typically used for short distance trips. These include primarily electric bicycles and standing e-scooters, but also small electric devices, which can be shared or personally owned.” Micro-mobility platforms hold the advantage that they are cheap and have a low volumetric and energy footprint, which ensures that a person can get to their destination in the most efficient and economical manner. Such micro-mobility platforms, though very useful, especially in high population density centers, are inherently unstable and supermaneuverable in nature, so the user operating such a device must have considerable skill and focus. Due to this, they are considered to be unsafe for day-to-day transit for the masses. But at the same time, such technology holds great potential to reduce pollution and congestion in population centers, which warrants a study into overcoming some of the shortcomings of such devices. With this project, an attempt will be made to design and implement an active balancing control system that will enhance user drive experience and safety.

(4:00 - 4:25) Machine Learning for Robotics Education

Team Members: Archie Milligan, Dominic Ferro, Tyler Looney

Abstract: Undergraduate students within the Robotics Engineering Department at Worcester Polytechnic Institute do not get enough exposure to growing industry focuses such as Artificial Intelligence and Machine Learning. We developed a curriculum that exposes students to applications, limitations, and implementations of machine learning, through the use of neural networks. We guided curious students through a workshop to allow them to experiment with neural networks on a Romi robot that is trained to follow a line. By the end of the workshop, all of the remaining student participants had successfully programmed, trained, and tested their neural networks on the Romi robots. Over 83% of participants felt their understanding of artificial intelligence and machine learning had improved and felt that the three hour duration of the workshop was sufficient to complete the lab activities. We surveyed alumni of the WPI Robotics Engineering Program and found that 43% stated that having a basic understanding of these concepts would benefit their industry experiences, illustrating that a curriculum such as ours would benefit students after graduation. With our curriculum, student participants were able to learn the applications, limitations, and implementations of machine learning through the use of neural networks and learn that neural networks are not a solution to every problem.

(4:30 - 4:55) Geo-referenced Image Stitching

Team Members: Abhay Chhagan Karade, Bhushan Rane, Piyush Malpure, Rushikesh Pramod Deshmukh

Abstract: In this project, we present a sequential image stitching and geographic data embedding pipeline. Unlike the approach of collecting all the images and then matching based on common features, we perform sequential image stitching on the fly. We interpolate and warp the GIS data associated with every image using the homography matrix generated during image stitching to obtain the canvas of longitude and latitude data associated with every pixel. To handle the heavy computational power requirements, we adopt a sliding window approach. This pipeline can be executed in parallel, and online live data can be used for image processing and geo-tagging. The presented image stitching pipeline can be used to maintain and use the visual record of work performed by robots in a single canvas with geographic data associated with every pixel coordinate of the stitched image. We have targeted the application of agricultural robots, where undetected weeds need to be searched and removed manually. In such cases, our pipeline would assist the robot operator in obtaining the current map of the farm field, and by using the geographic data of the left-out weed, the operator can send the robot to remove it.
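
The idea of carrying geographic data along with each pixel through the stitching homography can be sketched as follows. This is a minimal illustration under stated assumptions: each image is taken to come with the longitude and latitude of its four corners, per-pixel values are bilinearly interpolated from them, and a 3x3 homography places the pixel on the canvas. The corner-based geo-referencing, the function names, and the sample coordinates are hypothetical and not taken from the project.

```python
def apply_homography(H, x, y):
    """Map pixel (x, y) through a 3x3 homography H (list of rows)."""
    xh = H[0][0] * x + H[0][1] * y + H[0][2]
    yh = H[1][0] * x + H[1][1] * y + H[1][2]
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    if abs(w) < 1e-12:
        raise ValueError("point maps to infinity under this homography")
    return xh / w, yh / w

def interpolate_geo(corners, u, v):
    """Bilinearly interpolate (lon, lat) from corner values.

    corners: dict with keys 'tl', 'tr', 'bl', 'br', each a (lon, lat) tuple.
    u, v: normalized image coordinates in [0, 1] (u across, v down).
    """
    def lerp(a, b, t):
        return tuple(ai + (bi - ai) * t for ai, bi in zip(a, b))
    top = lerp(corners["tl"], corners["tr"], u)
    bottom = lerp(corners["bl"], corners["br"], u)
    return lerp(top, bottom, v)

def geotag_on_canvas(H, corners, width, height, px, py):
    """Return the canvas position and (lon, lat) of image pixel (px, py)."""
    u = px / (width - 1)
    v = py / (height - 1)
    return apply_homography(H, px, py), interpolate_geo(corners, u, v)

# Example: a pure-translation homography shifts the image by (100, 50) on the canvas.
H_shift = [[1.0, 0.0, 100.0], [0.0, 1.0, 50.0], [0.0, 0.0, 1.0]]
corners = {"tl": (-71.81, 42.28), "tr": (-71.80, 42.28),
           "bl": (-71.81, 42.27), "br": (-71.80, 42.27)}
canvas_xy, lon_lat = geotag_on_canvas(H_shift, corners, 101, 101, 50, 50)
print(canvas_xy, lon_lat)
```

Because the same homography moves both the pixel and its geographic tag, every canvas location keeps a longitude and latitude that an operator could later use to send a robot back to a specific spot.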

(5:00 - 5:25) Multi-robot mapping

Team Members: Keval Shah, Michael Da Silva, Pratik Surendra Kumbhare, Sairam Venkataramani, Shubham Malhotra

Abstract: Mapping the surrounding environment is a key task in the exploration of unknown terrains. This problem of exploring an unknown terrain breaks down into creating a map of the surroundings where a GPS system is not available, as in Mars exploration. With this idea as our foundation, our project develops a multi-robot-based mapping system. The mapping is performed not only by two different ground robots but also by two different sensors to mimic a real-time exploration scenario where the breakdown of a robot or a sensor becomes a concern. The system is developed beginning with a collection of outdoor data, on an earthly surface (flatness assumed) within one of the buildings of the Worcester Polytechnic Institute campus. A TurtleBot3 and a Create3 robot are deployed for the tasks. The sensor system comprises a LiDAR that accompanies the TurtleBot3 and a Zed2 stereo camera mounted on Create3. Upon completion of the data collection, we proceed with map merging to produce a final map.

(5:30 - 5:55) Modular Serial Manipulator Platform for Educational Robotics

Team Members: Benjamin Mayeux, Curtis Lee, Matthew Langkamp, Shawn Salvatto

Abstract: We propose a modular serial manipulator platform for educational use. This platform is designed to be easily accessible for educators and students, enabling curricula from middle school up through a graduate level. Through reconfigurability, this single platform can give experience with different serial manipulators such as 2R, 3R, and 6 DoF. The low cost and modular framework allow for a single kit to be used over multiple courses. The platform identifies its configuration through electro-mechanical connections and creates an internal model relating to its kinematics and dynamics. This system significantly reduces the time and money spent assembling and maintaining classroom robotic infrastructure.

(6:00 - 6:25) HASEL Actuator Soft Robotic Finger Exoskeleton for People with High Muscle Tone

Team Members: Atharva Mahindrakar, Jonathan Landay, Marissa Langille, Nachiket Sant

Abstract: There is a need for variable stiffness in soft robotic systems to provide additional properties. Soft robotic systems could benefit from increasing force transmission to the environment while maintaining soft properties or creating configurations that the system can morph into. One specific problem that could be mitigated through the development of a variable stiffness system is muscle atrophy and bone degradation when using exoskeletons. To mitigate atrophy while still providing mobility assistance, a small-scale rehabilitation exoskeleton will be developed for a finger. Rigid exoskeletons take on most, if not all, load-bearing for extremities. If the bone is not continuously loaded and subjected to compressive and shear forces, it breaks down. Additionally, if the muscle is not regularly used, it will atrophy. A soft exoskeleton with variable stiffness could allow for required load bearing and muscle engagement, while still providing the necessary support and joint actuation. A secondary problem this project aims to address is the need for effective untethered soft actuators. Current actuators commonly used for soft robotics are pneumatic and hydraulic. These actuators are bulky and not ideal for most soft robotic applications. A potential solution is adapting a hydraulically amplified self-healing electrostatic (HASEL) actuator. There has already been extensive research on developing a HASEL actuator for soft robotics in the Keplinger Labs at UC, Boulder. These actuators transform electrical energy to mechanical energy by changing the shape of the actuator depending on the constraint layers around a liquid dielectric pouch. To achieve meaningful actuation in an untethered system, a non-pneumatic and non-hydraulic actuator must be developed. The goal of the project is to create a small-scale rehabilitation exoskeleton with variable stiffness.

(6:30 - 6:55) Deep Learning-Driven Human Following Robot: Detection, Tracking, and Navigation

Team Members: Ashwij Kumbla, Himanshu Gautam, Nihal Suneel Navale, Purvang Patel

Abstract: Mobile robots have seen unprecedented growth in application areas ranging from voyaging to extreme ocean depths to expanding the horizons of deep space exploration. However, they still face challenges when operating alongside humans due to highly dynamic human behaviour and the difficulty of integrating these behaviours into robot control. The growth of deep learning-based computer vision algorithms can provide new solutions to widen the applications of mobile robots in the presence of humans. This project proposes a unique solution that incorporates a human to drive a robot autonomously in any given environment. The main idea is to capture the person to be tracked and followed and save the corresponding features in the system. When a person enters the frame again, the features of this person are compared with the saved features to identify the subject of interest. Then the person is tracked to generate the desired trajectories to follow. Finally, a motion planner is used to make the robot follow these generated trajectories. The proposed solution is a framework that can be applied to ground-based as well as aerial robots; however, considering the rigorous timeline, we have developed the solution specifically for ground-based robots.

UH 500 - Screen H

(3:30 - 3:55) Dig Safe Autonomous Cable Detection Robot

Vaibhav Nandkumar Kadam

Abstract: Dig Safe regulations require utility companies to mark the location of buried utilities prior to the start of a construction project. Detecting and marking cables is time consuming, monotonous, and can be dangerous to utility workers due to the working environment. The goal of this project is to develop an autonomous robotic system that detects and marks buried electrical cables safely and efficiently. We present the development of a navigation stack for the robot that focuses on estimating the buried cable path from noisy sensor data and on path tracking algorithms for following the detected cable, along with integration of GIS-based power cable data for guidance and navigation.

Advisors:

Professor Jing Xiao, Worcester Polytechnic Institute (WPI)

Professor Greg Lewin, Worcester Polytechnic Institute (WPI)

(4:00 - 4:25) Performance Evaluation of Deep Key Point Detectors for 3D Point Cloud

Team Members: Durga Karappannan, Krishna Durgaraju, Silesh Rajagopalan

Abstract: 3D keypoint detection is an essential task in computer vision, with applications in areas such as 3D reconstruction, object recognition, SLAM, and pose estimation. There are a number of different methods for detecting 3D keypoints, each with its own advantages and disadvantages. In this paper, we review and classify state-of-the-art 3D keypoint detection methods. We then present a comprehensive analysis that compares the performance of various learning-based keypoint detectors with respect to repeatability and point cloud registration. With the rise of deep learning-based detectors and descriptors, picking the right keypoint detector for one's requirements has become a challenge. This project helps in the selection of the right learning-based keypoint detector through extensive performance evaluation and thorough comparison based on reliable and robust metrics.

Advisor: Professor Ziming Zhang, Worcester Polytechnic Institute (WPI)

(4:30 - 4:55) Evaluating Current Consumer Multi-Material FDM 3D Printing Technologies

Brian Katz

Abstract: The introduction of multi-material printing allows for the creation of integrated components and assemblies, increasing part complexity while decreasing the number of parts and assembly time. While industrial solutions to multi-material printing feature incredible accuracy, they are often limited in material selection and are challenging to acquire due to their high cost. However, several consumer technologies exist that use unique actuation mechanisms to change filament, such as filament swapping, filament joining, and multiple extruder mechanisms, and that make use of readily available commercial filaments. In this study, four products (the Prusa MMU2S, Mosaic Palette 3 Pro, Ultimaker 3S, and E3D Toolchanger + Motion System) are characterized on the basis of print quality and ease of use to determine the most suitable consumer multi-material technology.

Advisor: Professor Markus Nemitz, Worcester Polytechnic Institute (WPI)

(5:00 - 5:25) Towards Soft, Printed, Wireless Sensors for Soft Robotic Applications

Ritwik Pandey

Abstract: The popularity of soft robots has led to a need for effective monitoring of their motion and the surrounding environment. However, the integration of traditional sensing methods has proven to be challenging, with researchers resorting to selectively rigidising sections of the soft robot to house the sensors. Furthermore, the number of wired connections required to acquire data from the sensors severely limits the sensor density. To address these issues, this piece of research proposes a suite of soft, wireless (RFID) printed sensors to replace traditional sensors. Various printing methods, including screen printing, direct ink write (DIW), and stencil printing were utilised to explore different sensor designs such as planar and layered, resistive and capacitive, to measure both strain and normal force. The study investigated cyclic strain, repeated force, and proximity-related interactions, and analysed their outcomes. The suitability of the sensors for chemical and moisture sensing, and their RFID integration potential are yet to be explored. Overall, the study yielded promising results with functional screen-printed strain sensors and DIW force sensors, indicating the need for further exploration to realise fully printed wireless sensors.

Advisor: Professor Markus Nemitz, Worcester Polytechnic Institute (WPI)

(5:30 - 5:55) Simulation and Control of The OpenManipulator X within MATLAB and Simulink to Aid The Robotics Curriculum

Brandon Simpson

Abstract: The OpenManipulator X is a four-degree-of-freedom robotic arm designed for use in educational and research environments. This project aims to explore its capabilities through simulation and control within MATLAB and Simulink. Additionally, this research project is aimed at contributing to the development of the robotics curriculum by helping explore the capabilities of the OpenManipulator X. The expected outcomes of this research project include a comprehensive understanding of the capabilities of the OpenManipulator X, as well as a set of Simulink models that can be used to replicate its behavior on the hardware. The project’s findings will contribute to the development of the robotics curriculum by providing practical experiences with robotics systems, controls, and simulation tools.

Advisor: Professor Siavash Farzan, Worcester Polytechnic Institute (WPI)

(6:00 - 6:25) Dynamically Dancing Drones

Shreyas Kanjalkar

Abstract: Recently, swarm robotics has emerged as a promising research area, leveraging the power of decentralized multiagent systems to solve complex tasks across various domains. Drawing inspiration from natural swarms, such as bees, ants, and birds, these systems enable enhanced flexibility, robustness, and scalability compared to traditional single agent robotic solutions. This paper introduces a novel approach for applying swarm robotics principles to dynamic drone performances, where drones not only interact with each other but also synchronize their movements with human dancers. By addressing the challenges of coordination, collision avoidance, and real-time adaptation, our proposed Planning and Perception algorithm showcases the potential of swarm robotics in the realm of entertainment and beyond. Furthermore, this research contributes to the broader understanding of swarm behavior and its practical applications, paving the way for innovative solutions in fields such as search and rescue, environmental monitoring, and transportation.

Advisor: Professor Nitin Sanket, Worcester Polytechnic Institute (WPI)

UH 500 - Screen I

(3:30 - 3:55) Motion planning and control for Autonomous Vehicle in CARLA simulator

Team Members: Samarth Shah and Puru Upadhyay

Abstract: The development of self-driving vehicles has seen significant advancements in recent years, revolutionizing the way we think about automotive transportation. These autonomous vehicles rely on four crucial decision-making modules: Route Planning, Behavior Planning, Motion Planning, and Trajectory Control, which work in tandem to ensure the safe and efficient navigation of the vehicle. This project aims to implement and integrate all four decision-making modules to achieve autonomous driving capability in the CARLA simulator. The Route Planning module creates a global route, while the Behavior Planning module makes decisions regarding various maneuvers such as lane following, tailgating, and overtaking. The Motion Planning module generates the vehicle’s trajectory, while the Trajectory Control module produces the required throttle and brake for trajectory tracking. The successful integration of these modules is demonstrated through simulations of highway and urban driving scenarios. Ultimately, the project highlights the potential of autonomous vehicles to reshape the future of transportation by improving efficiency, safety, and convenience.

Advisor: Professor Xinming Huang, Worcester Polytechnic Institute (WPI)

(4:00 - 4:25) Introducing Spatial Invariant Convolutions in Depth Completion

Gaurav Bhosale

Abstract: Depth Completion Networks are used in the perception systems of robots with low-resolution point clouds. LiDARs are still expensive, and most small robots tend to rely on 16- or 32-laser resolution scanners. The output of these is only partially useful, and many applications require higher resolutions. We introduce Spatial Invariant Convolutions in PeNet with the goal of improving the memory usage and latency of the system.

Advisor: Professor Ziming Zhang, Worcester Polytechnic Institute (WPI)

(5:00 - 5:25) Robust Motion Planning for Connected Non-Holonomic Agents using Voronoi Cells and Hybrid A*

Harin Vashi

Abstract: This project presents a novel approach to multi-agent motion planning for connected autonomous vehicles, which seamlessly integrates both the kinematic and dynamic constraints of individual agents. The proposed approach effectively generates feasible and executable trajectories while ensuring the method’s applicability and adaptability across a wide range of scenarios. The proposed method adopts the features of Voronoi Cells and Hybrid A* to ensure collision-free navigation of multiple agents in complex dynamic environments. By partitioning the space around the non-holonomic agents and planning the optimal path, the proposed method guarantees efficient navigation while maintaining safety. A deadlock avoidance strategy is proposed to add another layer of safety. We demonstrate the effectiveness and robustness of the approach in terms of collision avoidance and deadlock resolution using simulations in diverse, randomly generated environments. The results show that the proposed method outperforms existing methods in terms of dynamic considerations and obstacle avoidance, making it a practical real-time motion planning approach for connected non-holonomic agents in complex environments.

Advisor: Professor Siavash Farzan, Worcester Polytechnic Institute (WPI)

(5:30 - 5:55) State Prediction and Control for Swarm Robots using Graph Neural Networks (GNN) and Multi-Agent Reinforcement Learning (MARL) for Collective Transport!

Amey Deshpande

Abstract: The research focuses on using Graph Neural Networks and Graph attention to aid Multi-Agent Reinforcement Learning for swarm robots to carry out collective transport tasks. It utilizes the graph structure of the robot formation to learn a coordinated and collaborative policy to efficiently carry out the collective transport of an object.

Advisor: Professor Carlo Pinciroli, Worcester Polytechnic Institute (WPI)

(6:00 - 6:25) Ensemble Learning for Robot Grasping

Chinmaya Khamesra

Abstract: The project presents an ensemble learning methodology for improving the performance of robotic grasp synthesis algorithms. We trained an ensemble convolutional neural network based on the Mixture of Experts (MOE) model, which incorporates several existing methods as “experts” and combines them to deliver a grasp judgment. On the Cornell Dataset, we measured the architecture’s performance using open-source algorithms from the literature, namely GRCNN, GGCNN, and a custom variation of GGCNN, as experts. We found that the architecture has a 7.5% higher grasp success rate than the individual algorithms. The methodology offers a viable method for raising the success rate of robotic grasping tasks, which can be used in a wide variety of robotics domains. Our results suggest that considering dataset diversity may significantly enhance the ensemble model's performance.

Advisor: Professor Berk Calli, Worcester Polytechnic Institute (WPI)

(6:30 - 6:55) Robot Waste Sorting System

Rajus Nagwekar

Abstract: Waste sorting is a crucial aspect of waste management systems, as not all waste can be disposed of in the same manner. Thus, it is vital to automate this process. We use two robotic arms along with RGBD cameras to rearrange the waste piles, identify key objects, and sort them out.

Advisor: Professor Berk Calli, Worcester Polytechnic Institute (WPI)

UH 243 Room 1 - Virtual Practicum (Meeting ID: 988 1837 0510)

(3:00 - 3:55) Using 3D Semantic Instance Segmentation for Robotics pick-and-place

Yihao Cai

Abstract: Warehouse automation has gained significant attention in recent years due to the increasing demand for efficient and cost-effective order fulfillment in the era of e-commerce. Among the critical tasks is the pick-and-place process, which involves the retrieval and placement of various objects from storage locations to designated destinations. Computer vision plays a crucial role in enabling robots to perform item-picking tasks with precision and efficiency. Most industry-standard solutions for robot vision tasks such as item detection and object segmentation are 2D-based. In this project, I leveraged a state-of-the-art 3D model (Mask3D) to evaluate the feasibility of a 3D-based approach as an alternative for solving issues that arise with the 2D-based methodology of industry solutions. Further, I tested the model on different kinds of collected datasets along multiple dimensions, including performance, robustness, and scalability. According to the results, Mask3D shows promise for object detection and segmentation in pick-and-place scenarios.

Advisors:

Professor Berk Calli, Worcester Polytechnic Institute (WPI)

Professor Siavash Farzan, Worcester Polytechnic Institute (WPI)

(4:00 - 4:55) RobotGPT: Programming the robot with natural language with ChatGPT

Yiran Fang

Abstract: During my internship at Orangewood Labs, ChatGPT emerged and sent shockwaves through all areas of software technology. We came up with the idea of using the same generative AI techniques to help humans program robotic arms, so that users can avoid the long learning process of robot programming. Together with my team, we built the first prototype of RobotGPT, which can perform custom pick-and-place tasks with a single RGBD camera.

Advisors:

Professor Berk Calli, Worcester Polytechnic Institute (WPI)

Professor Mahdi Agheli, Worcester Polytechnic Institute (WPI)

(5:00 - 5:55) Improving the Nadia Humanoid robot for use in urban environment

Khizar Mohammed Amjec Mohamed

Abstract: The Nadia humanoid robot housed at IHMC is being developed to operate in urban environments. Some of the goals of the current research on Nadia include improving the action of opening doors, manipulating objects, clearing light and heavy debris, and walking reliably on rough terrain. In its current state, multiple actuators on Nadia are powered using a stationary hydraulic unit and a stationary electric power supply. Nadia currently also possesses electric actuators with planetary gearboxes on its arms. In order to meet the above-mentioned research goals, a new hydraulic and electric power supply will be directly mounted on the robot. The gearboxes on the robotic arms will also be replaced with cycloidal drive actuators to reduce backlash and increase the power-to-weight ratio. With regard to the new hydraulic unit, I designed transmissions for a hydraulic pump and other miscellaneous parts. I also integrated cycloidal actuators in the forearm of the robot and designed parts for cable routing and output shafts.

Advisors:

Professor Andre Rosendo, Worcester Polytechnic Institute (WPI)

Professor Siavash Farzan, Worcester Polytechnic Institute (WPI)

UH 243 Room 2 - Virtual Practicum (Meeting ID: 970 1676 4583)

(3:30 - 3:55) 3D printer nozzle Capable of producing small, hollow, and tubular thermoplastic Extrusions

Ethan Wilke

Abstract: Additive manufacturing (3D printing) allows engineers and researchers to create complex and intricate geometries that would otherwise be incredibly difficult to manufacture with traditional techniques (e.g., subtractive manufacturing or injection molding). The most commonly-available form of additive manufacturing is FDM Printing (Fused Deposition Modeling). FDM printers use a material extrusion process, where thermoplastics are deposited layer by layer and parts are built from the ground up. Thermoplastics are plastics that at some temperatures become soft and flexible, and solidify when cooled (PLA, TPU, ABS, ASA, PC). Although FDM printers excel at speed, build size, and material availability, they struggle to produce high-resolution prints on a small scale. The use of layers leads to anisotropic parts that are weaker at layer lines, compromising the material’s mechanical properties. All 3D printers generate their “prints” by layering material on top of previously laid material to create the desired geometries and parts. These layers are prone to capturing air during printing and become weak points in the structure of the parts where breaks are more likely to occur. One area where current methods of 3D printing have fallen short is in the creation of small (< 2 mm Outer Diameter), thin-walled, and flexible tubular structures. The small size and hollow geometries of these structures are impossible to manufacture using current 3D printing methods on the market. FDM printers cannot produce high resolution and accurate shapes for tubes this size. The highest-resolution printers currently available on the market (SLS and SLA) can produce smaller tubes than FDM printers but are still incapable of producing tubes smaller than 3mm and the tubes that they can produce suffer from inaccurate OD and ID dimensions and inconsistencies between prints. 
The largest issue that affects tubes created on all current 3D printers is their anisotropic material properties from the layered construction. In order to maintain the benefits of FDM printers (speed, build size, and material availability) while also creating small, isotropic, and high-resolution extrusions, we created a new nozzle design that can be used to retrofit any conventional FDM 3D printer. This nozzle design allows for any melted thermoplastics to be pushed into the nozzle and come out in a multi-feature extrusion (shapes with holes and/or non-circular shapes) such that the desired part can be “printed” in one consistent “layer”. As of this writing, we have designed, manufactured, and tested a nozzle design capable of creating tubular extrusions to produce custom-sized tubes. Because the design utilizes existing nozzle thread specifications, any FDM 3D printer can easily be adapted by simply swapping the nozzle, and no further hardware modifications are required. Our invention opens the door to a variety of new ways to utilize already existing FDM printers. FDM printers equipped with this nozzle design can not only produce tubes that are smaller, but the resulting tubes are also isotropic in nature and therefore more closely resemble the mechanical properties of the original filament.

Advisor: Professor Loris Fichera, Worcester Polytechnic Institute (WPI)

(4:00 - 4:55) Optimizing Deployment of Warehouse Robots Using Map Validator Tool

Anagha Ramaswamy

Abstract: Warehouse robotics is becoming increasingly important for businesses that seek to optimize their inventory management processes. Corvus Robotics is a leading provider of warehouse robotics solutions, offering a range of tools that help businesses track their inventory with greater efficiency. One of Corvus Robotics’ primary offerings is the use of drones for inventory tracking, which can result in faster and more accurate stock-taking. As part of my work at Corvus Robotics, I developed a few deployment tools, including a map validator tool, to ensure that the warehouse map is accurate and up-to-date, which has helped reduce errors during deployments. This tool checks the accuracy of the warehouse map, ensuring that the drone can navigate smoothly without encountering any obstacles. The development of the map validator tool and other deployment tools provides valuable insights and significant benefits such as increased operational efficiency, improved accuracy, and reduced labor costs for businesses.

Advisor: Professor Markus Nemitz, Worcester Polytechnic Institute (WPI)

(5:30 - 6:25) Online multi camera-IMU calibration

Youness Bani

Abstract: Corvus Robotics specializes in deploying indoor drones for warehouses, providing advanced inventory and inspection solutions. Accurate calibration of the camera-IMU system is crucial for optimal drone performance, as localization is a fundamental aspect. This practicum focuses on implementing a technique that quickly and effectively calibrates all drone cameras and IMU, ensuring precise localization within indoor facilities. The proposed algorithm utilizes a direct feature tracking method and an extended Kalman filter (EKF) to estimate the state of the system. Achieving optimal calibration through this approach can enhance the efficiency and performance of Corvus Robotics’ drone.

Advisors:

Professor Andre Rosendo, Worcester Polytechnic Institute (WPI)

Professor Siavash Farzan, Worcester Polytechnic Institute (WPI)

UH 150E - Virtual Practicum (Meeting ID: 955 2332 2778)

(3:30 - 4:25) Force Feedback for Obstruction Detection in Robotic Manipulation

Brian Valentino

Abstract: Many tasks within warehouse environments are being targeted for automation due to shortages in the labor pool, particularly for jobs with a high injury rate. The Boston Dynamics Stretch robot is one such automation solution. Stretch is designed to perform manipulation tasks in the warehouse environment, and its first application is to unload cases from tractor trailers into the warehouse. As part of this manipulation task, Stretch needs to be able to estimate the dimensions of the cases that it manipulates. In scenarios where it is difficult to get an estimate of all dimensions of the target case, we would like to attempt the manipulation task but detect if the manipulation target is obstructed by collisions with the environment. In this paper we present a method for detecting these obstructions. This method uses two dynamics models of the robot-case system, based on the mass properties of the target case and the forces measured by the robot at the end effector, and compares the output of these models to determine if the target case is obstructed.

Advisor: Professor Mahdi Agheli, Worcester Polytechnic Institute (WPI)

(5:00 - 5:55) Path planning for multi UAV

Saurabh Kashid

Abstract: Path planning is important in any field of robotics, depending upon the application. In this practicum I worked on path planning for building inspection. To inspect the building, we design the path to cover the entire building, so that we obtain RGB and thermal images of each part of the building. The path is designed for multiple UAVs, with static obstacle avoidance, to reduce the time required to cover the building.

Advisor: Professor Chris Nycz, Worcester Polytechnic Institute (WPI)

Virtual Practicum (Meeting ID: 974 6989 0156)

(4:00 - 4:55) Infrared Point Based Localization System

Prarthana Sigedar

Abstract: Target Arm Inc. is developing an autonomous flight system called Tular for launching and recovering UAVs from moving vehicles. To ensure successful operation, the UAVs require precise localization relative to Tular, which currently relies on fiducial markers that make the product design complex. Therefore, there is a need to develop an alternate localization system based on infrared points that can be integrated into Tular’s design so as to make the system more accessible to the customers.

Advisors:

Professor Siavash Farzan, Worcester Polytechnic Institute (WPI)

Professor Nitin Sanket, Worcester Polytechnic Institute (WPI)

Virtual Directed Research (Meeting ID: 969 0157 9479)

(3:30 - 3:55) 3D Object Detection using LiDAR

Shreyansh Goyal

Abstract: The rise of deep learning has contributed to the development of the decision-making skills of autonomous vehicles on the road. The most important task for the autonomous vehicle on the road is to detect the objects around it for safe navigation. The literature already has significant 2D object detection methods but it’s important to detect the objects in 3D to have a better understanding of the surroundings and move precisely.

In autonomous driving, the most commonly used 3D sensors are LiDAR sensors, which generate 3D point clouds to capture the 3D structures of the scenes. In some cases, both LiDAR and camera are used, and their data is fused for a better understanding of the scene. Although such multimodal networks help to improve the detection scores, the difficulty of point cloud-based 3D object detection mainly lies in the irregularity of the point clouds. We need networks which directly operate on 3D point clouds and achieve robust and accurate 3D detection performance. In this project I have worked on two such networks, namely PointPillars and PointRCNN, which directly operate on LiDAR point clouds and perform box regression and object classification on them. I have trained both networks and improved their performance for different classes as compared to the original papers on the KITTI dataset.

Advisors:

Professor Ziming Zhang, Worcester Polytechnic Institute (WPI)

Professor William Michalson, Worcester Polytechnic Institute (WPI)

(4:00 - 4:25) Ensemble Learning for Robot Grasping

Akshay Mahesh Laddha

Abstract: This research aims to improve the success rates of robotic grasp synthesis by combining multiple existing algorithms using an Ensemble Learning Methodology. The study trains individual experts in generative grasping and dex-net, and evaluates pre-trained models Resnet16 on the Cornell grasp dataset to achieve better grasping decisions. The proposed ensemble architecture of these experts aims to improve the diversity metrics and ultimately enhance the overall grasp success rates.

Advisor: Professor Jing Xiao, Worcester Polytechnic Institute (WPI)

(4:30 - 4:55) Semantic Segmentation for Robotic Apple Harvesting using Synthetic data in Simulation

Ghokulji Selvaraj

Abstract: Robotic harvesting of apples in orchards is a daunting task, and detecting apples is a particularly important part of it, since detection faces many constraints such as changes in lighting, occlusion, and different varieties of apples. In this paper we propose a simulation setup for testing and prototyping apple segmentation. We use the fully convolutional U-Net architecture for binary semantic segmentation. We propose to build a complete 3D simulation environment in Gazebo for apple segmentation. In addition, we propose to create a synthetic apple dataset of various varieties and configurations. We then use the generated synthetic data to train a binary semantic segmentation model to segment the apple from the simulation scene. We evaluate the trained model on a real-time Gazebo simulation scene and test the segmentation with a camera mounted on a Dual-UR5 Husky robot. An overview of the dataset is presented along with the segmentation model and baseline performance on the synthetic apple dataset.

Advisor: Professor Siavash Farzan, Worcester Polytechnic Institute (WPI)

(5:00 - 5:25) Dexterous In-Hand Manipulation

Team Members: Kunal Gajanan Nandanwar and Anujay Sharma

Abstract: This work proposes a novel approach to address the challenging task of manipulating objects by hand without having to re-grasp them or replace them on the surface. Despite recent advances in deep learning and reinforcement learning, imparting the same generalized skills to robots remains difficult. We created a graph-based planner in 3 dimensions on symmetric geometrical objects, which also formed a solid foundation for irregularly shaped objects. This approach shows promise for advancing the field of object manipulation, which is still in its nascent stages.

Advisor: Professor Berk Calli, Worcester Polytechnic Institute (WPI)

(5:30 - 5:55) Benchmarking of Robotic Grasping Algorithms

Team Members: Kshitij Sharma

Abstract: Robotic grasping has attracted significant attention in recent years due to its wide range of potential applications in industry, healthcare, and service sectors, but the robotics community is lacking the tools to assess the performance of the manipulation algorithm and draw meaningful comparisons. In this paper, I present a comprehensive benchmarking study of the ros\_deep\_grasp algorithm, which is based on the ResNet50 architecture, against an existing benchmarking protocol for robotic grasping algorithms. Our evaluation employs YCB benchmarking datasets to assess the performance, robustness, and generalizability of the ros\_deep\_grasp algorithm. The research introduced a new approach to evaluate the algorithm, which divides each experimental result into true positives, true negatives, false positives, and false negatives for every object in the YCB dataset to evaluate the algorithm’s performance. The purpose of this innovative evaluation method is to provide a more thorough and unbiased assessment of the algorithm’s abilities in recognizing and manipulating objects. The performance of the ros\_deep\_grasp algorithm on the benchmarking protocol is ultimately demonstrated through several evaluation methods, as indicated by the results. These methods were employed to comprehensively assess the algorithm’s effectiveness and provide a detailed understanding of its capabilities.

Advisor: Professor Berk Calli, Worcester Polytechnic Institute (WPI)

(6:00 - 6:25) Adaptive Jacobians for Visual Servoing of Planar Manipulators

Winston Crosby

Abstract: This project presents an investigation of adaptive visual servoing control methods for a planar robotic manipulator. The goal of visual servoing is to control the motion of a robot based on visual feedback from a camera system. Two separate control methods were explored as well as two alternative visual tracking methods. The presented work contributes to the development of more reliable and efficient robotic systems.

Advisor: Professor Berk Calli, Worcester Polytechnic Institute (WPI)