An interdisciplinary team of researchers at Worcester Polytechnic Institute (WPI) has received a four-year, $1.6 million award from the National Institutes of Health (NIH) to develop a smartphone app that will allow patients and their caregivers to track and assess chronic wounds, helping lower costs associated with frequent doctor and hospital visits, and catching serious complications before they lead to expensive hospitalizations and life-altering amputations.

Emmanuel Agu, associate professor of computer science and coordinator of WPI’s Mobile Graphics Research Group, is the principal investigator for the project. Co-principal investigators include Diane Strong, a professor in WPI’s Foisie Business School; Bengisu Tulu, an associate professor in the Foisie Business School; and Peder Pedersen, a retired professor of electrical and computer engineering.

The app, which is in the early development stage, is being designed to be used by patients and caregivers, including visiting nurses, who need to regularly check the status of potentially dangerous wounds in the home environment. It will make patients more involved in their own care, cut down on unnecessary doctor visits, and issue alerts when an emergency trip to the doctor or hospital is necessary, Agu said.

A 2017 study found that chronic, nonhealing wounds affect 5.7 million people in the United States, or about 2 percent of the population, at an annual cost of $20 billion. The cost of just transporting chronic wound patients to medical visits is estimated at about $200 million per year.

“This is a big problem,” said Agu. “Wounds, wound management, and amputations have a huge cost, both financially and physically, for the people who suffer from them, as well as for their families. I like to work on real problems that make a difference for people. Much of my research is in imaging and computer graphics. Wound management is a problem that imaging technology can help with.”

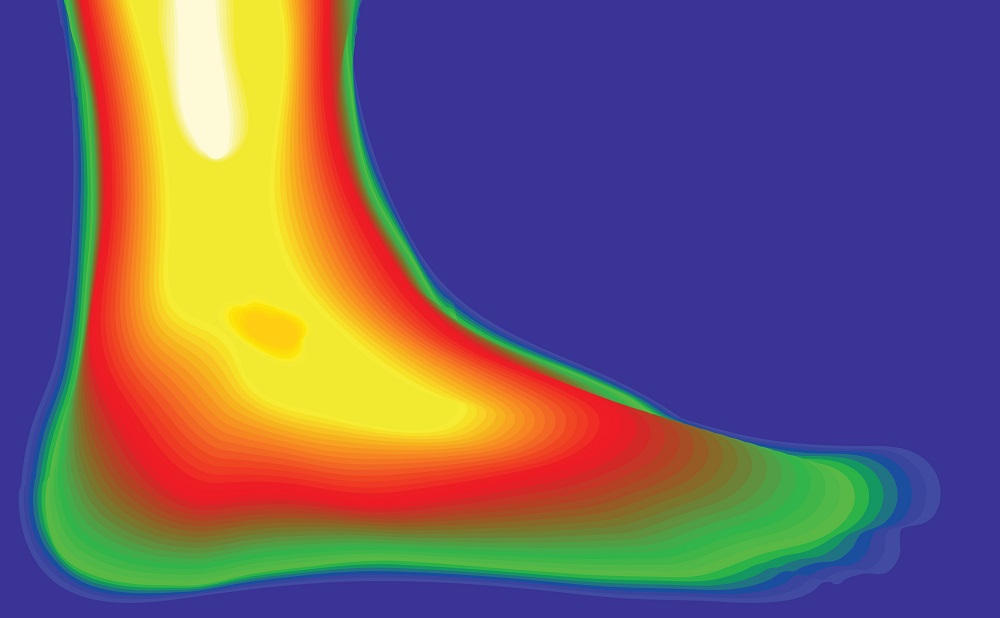

Patients or their caregivers will use the app to photograph chronic wounds with a smartphone. Machine learning algorithms built into the app will measure wound assessment metrics, including size, depth, and color, which indicate how healing is progressing. The algorithms will compare the readings over time to determine whether the wound is shrinking or expanding, or whether there are other changes, like darkening tissue, that could indicate a growing infection or other complication. The app will also compute a healing score that tells the patient whether the wound is getting better, is unchanged, or is worsening. Finally, the app will suggest one of three actions: stay the course, consult a wound specialist for advice regarding treatment, or seek immediate care.
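To make the idea of comparing readings over time concrete, here is a minimal Python sketch. It is not the WPI team's algorithm: the `WoundReading` type, the `area_trend` and `classify` functions, the use of a least-squares slope, and the tolerance value are all assumptions chosen for illustration, and the threshold has no clinical meaning.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class WoundReading:
    """One measurement taken from a photo (hypothetical structure)."""
    day: int          # days since the first photo
    area_cm2: float   # estimated wound area in square centimeters


def area_trend(readings: List[WoundReading]) -> float:
    """Return the least-squares slope of wound area over time (cm^2 per day)."""
    n = len(readings)
    if n < 2:
        raise ValueError("need at least two readings")
    mean_day = sum(r.day for r in readings) / n
    mean_area = sum(r.area_cm2 for r in readings) / n
    num = sum((r.day - mean_day) * (r.area_cm2 - mean_area) for r in readings)
    den = sum((r.day - mean_day) ** 2 for r in readings)
    if den == 0:
        raise ValueError("readings must span more than one day")
    return num / den


def classify(slope: float, tolerance: float = 0.05) -> str:
    """Map a slope to one of the three states named in the article.

    The tolerance is an illustrative placeholder, not a clinical threshold.
    """
    if slope < -tolerance:
        return "getting better"
    if slope > tolerance:
        return "worsening"
    return "unchanged"


if __name__ == "__main__":
    history = [
        WoundReading(day=0, area_cm2=10.0),
        WoundReading(day=7, area_cm2=8.6),
        WoundReading(day=14, area_cm2=7.2),
    ]
    print(classify(area_trend(history)))
```

A real system would combine several metrics (size, depth, color) rather than area alone, and the step from a healing state to a recommended action would depend on clinical expertise that this sketch does not attempt to model.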

The new wound app is an evolution of work Agu and his research team completed for Sugar, an app designed to help people with diabetes track and manage their weight and blood sugar levels, and also photograph and assess the status of any chronic foot ulcers. In the current research, Agu will build on the wound assessment component of Sugar, which was developed with a $1.2 million grant awarded in 2011 by the National Science Foundation (NSF). And while Sugar focused exclusively on diabetic wounds on the feet and legs, the new app is expected to one day be expanded to assess a broader array of chronic wounds, including arterial, venous, and pressure ulcers, also known as bed sores.

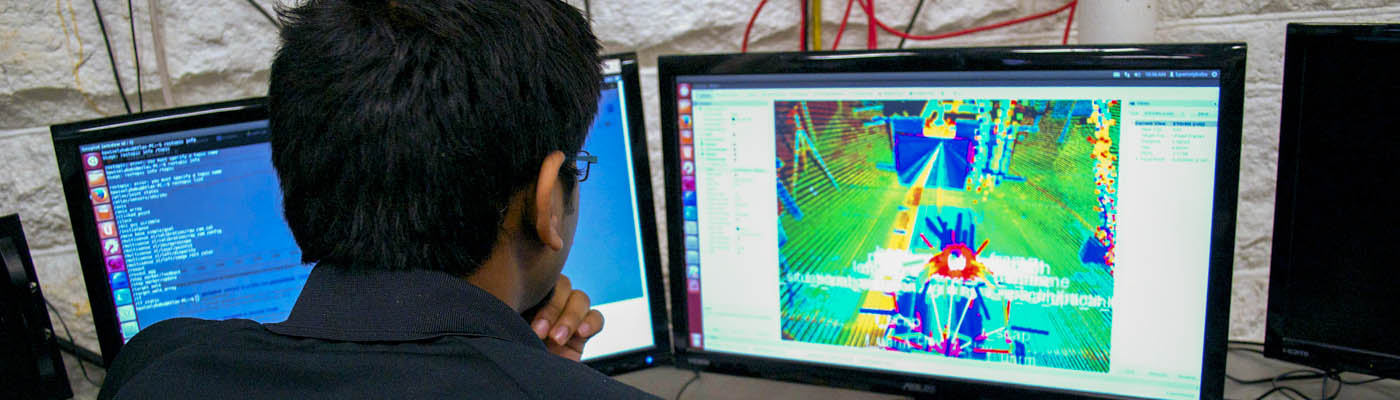

Agu, Pedersen, and Clifford Lindsay, assistant professor of radiology at the University of Massachusetts Medical School, will lead an effort to address one of the key technical challenges of the new project: processing imperfect smartphone photos taken by amateurs. Agu said his team understands that patients are likely to take photos from too far away or too close up, from an angle, or under poor lighting. He said shadows are particularly problematic for wound analysis, because they affect how the computer vision algorithm perceives the wound’s colors and depth.

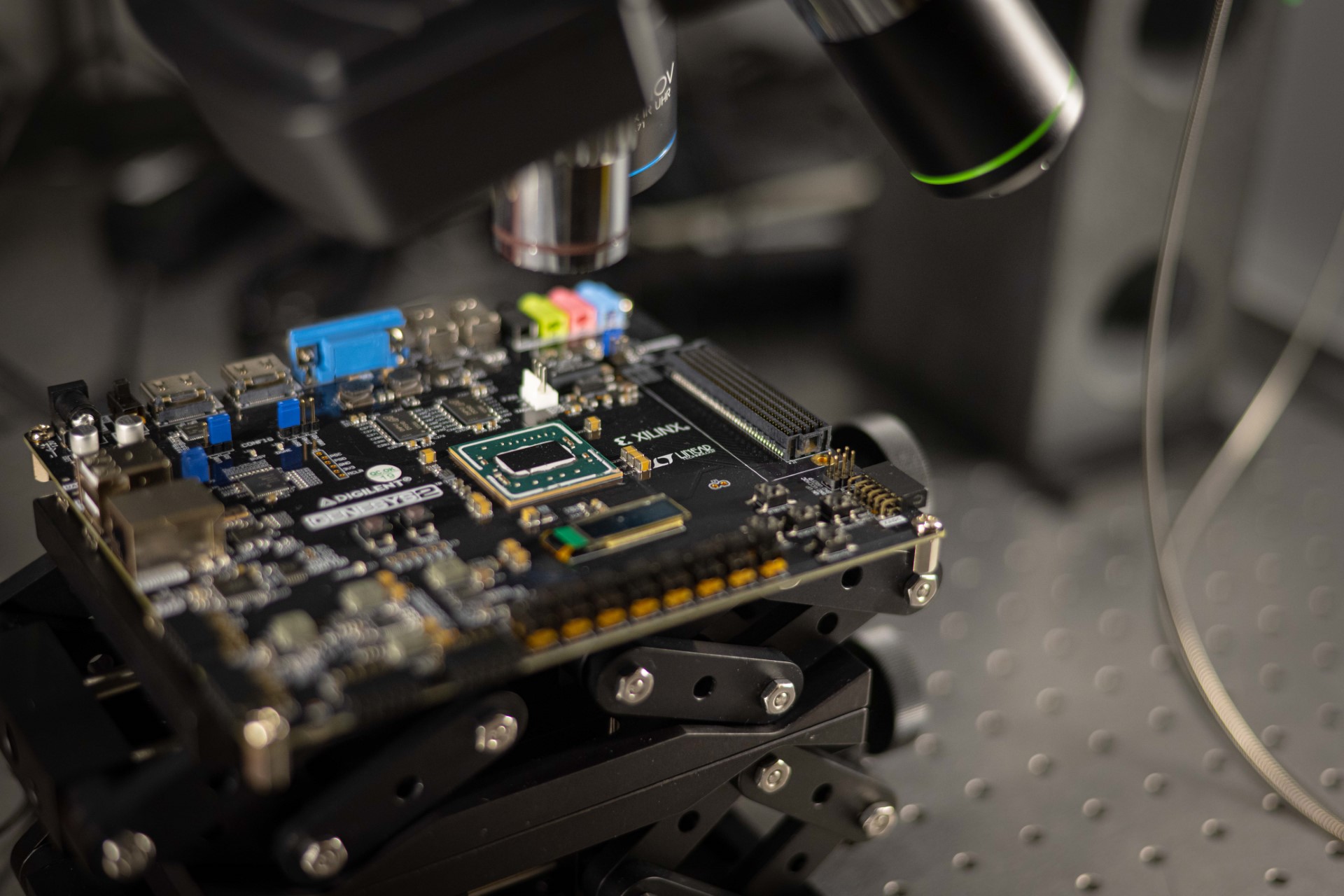

To address this problem, the team will develop algorithms similar to those used in facial recognition software that can transform each image so it appears as if it were taken straight on, at the proper distance, and under ideal lighting conditions. They will use techniques from an area of computer science called computational photography. “Computational photography has been applied to natural images, like landscapes, but not in the medical domain,” Agu said. “This is cutting-edge research that we believe will produce a good solution to this problem.”
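As a rough illustration of what normalizing lighting can mean, the Python sketch below applies gray-world white balance, a simple classical color-correction technique. This is a stand-in chosen for brevity, not the method the WPI team plans to use, and it addresses only color cast, not viewing angle, distance, or shadows. The function name `gray_world_balance` and the representation of an image as a flat list of RGB tuples are assumptions for this example.

```python
from typing import List, Tuple

Pixel = Tuple[float, float, float]  # (red, green, blue), each in 0..255


def gray_world_balance(pixels: List[Pixel]) -> List[Pixel]:
    """Rescale each color channel so that all three channel means are equal.

    Gray-world assumes the average color of a scene should be neutral gray,
    so any difference between channel means is treated as a lighting cast.
    """
    if not pixels:
        return []
    n = len(pixels)
    # Average intensity of each channel across the image.
    means = [sum(p[c] for p in pixels) / n for c in range(3)]
    gray = sum(means) / 3
    # Gain that moves each channel mean to the shared gray level.
    gains = [gray / m if m > 0 else 1.0 for m in means]
    # Apply the gains, clipping to the valid 0..255 range.
    return [
        (
            min(255.0, p[0] * gains[0]),
            min(255.0, p[1] * gains[1]),
            min(255.0, p[2] * gains[2]),
        )
        for p in pixels
    ]


if __name__ == "__main__":
    # Two pixels photographed under a reddish light (hypothetical values).
    tinted = [(200.0, 100.0, 100.0), (100.0, 50.0, 50.0)]
    for pixel in gray_world_balance(tinted):
        print(pixel)
```

Gray-world breaks down when a scene is dominated by one color, which is often the case in a close-up wound photo, so it is shown here only to convey the general idea of correcting an image toward standard conditions.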

In addition to fixing the images, the research team will need to train their algorithms to properly assess and interpret them, work that will draw on the decision support expertise of Strong and Tulu. The team will expose their algorithms to hundreds of images of actual wounds taken, with patient consent, by Lindsay. Raymond Dunn ’78, chief of plastic and reconstructive surgery at UMass Memorial, is a consultant to the project.

Agu and the team will feed the photos, along with wound care specialists’ expert assessments of the wounds and what kind of treatments they require, into the machine learning system so the app will “learn” how to analyze the wounds and calculate what care advice to give. Agu’s team will also test the algorithms on realistic simulated wounds they create with special 3D printers.

“This won’t replace doctor visits entirely, but it will augment those visits,” said Agu. “Patients or caregivers can check in anytime they want using this app and get more feedback than they do with occasional doctor visits. If people self-monitor, they are more likely to change their behavior, keep a closer eye on their wounds, and take the proper care those dangerous wounds need.”

The wound app is being developed on the Android platform, but Agu expects it ultimately to be adapted for the iPhone platform as well.